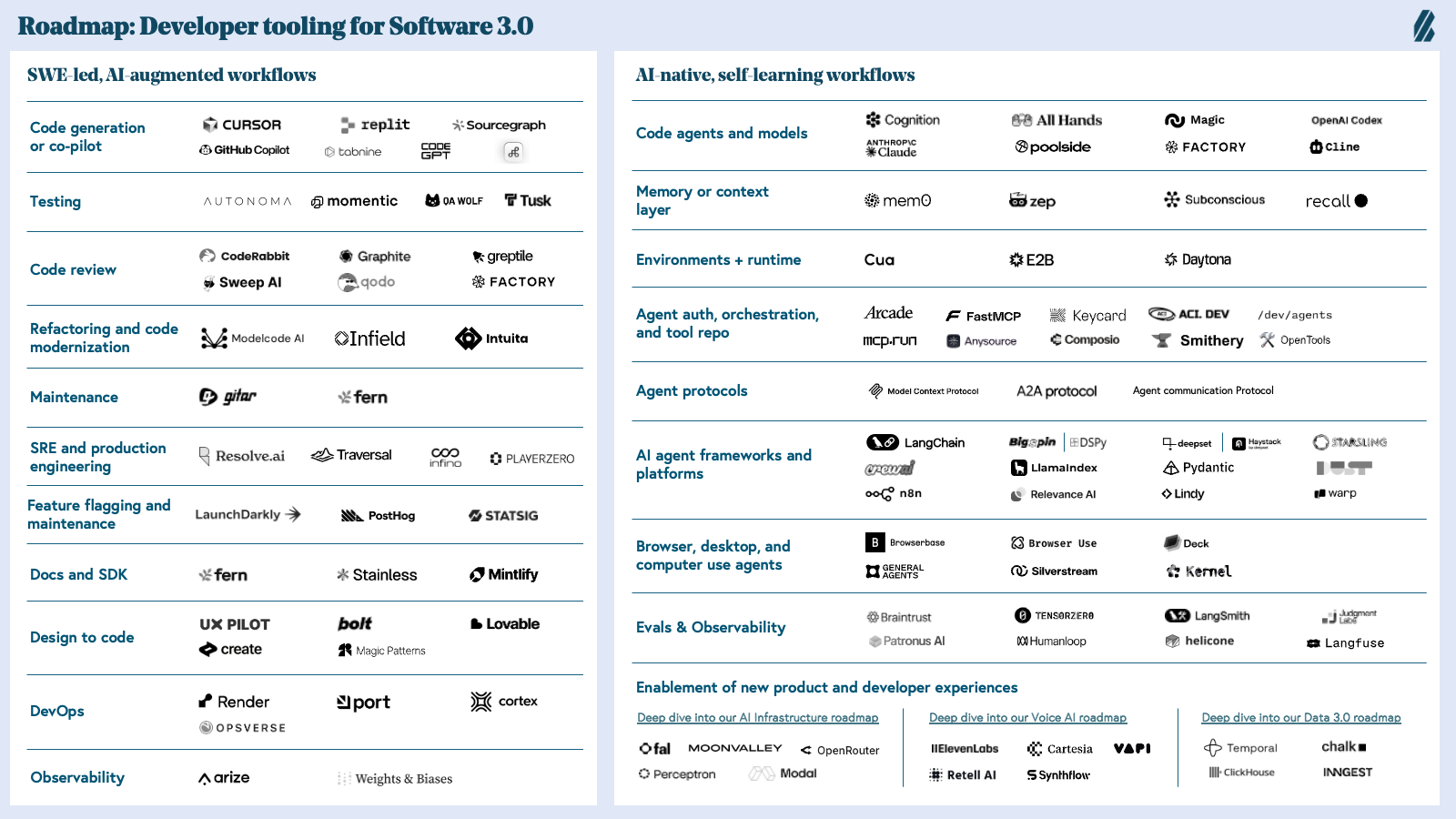

Roadmap: Developer Tooling for Software 3.0

From “Hello, World” to “Hello, AI”: Software engineering has leapt from human–AI collaboration to orchestration — and now toward full autonomy.

When GitHub Copilot previewed in June 2021, roughly a year and a half before ChatGPT sparked the broader AI revolution, it wasn't just another developer tool release. It was the first signal of Software 3.0: an era where natural language becomes the primary programming interface and AI agents don't just assist developers, they evolve into developers themselves.

At Bessemer, we've had a front-row seat to every major shift in developer tooling. From Twilio turning telecom into APIs, to Auth0 abstracting authentication complexity, to Zapier automating workflows (and unlocking the pre-vibe coder, “non-technical” user), to HashiCorp revolutionizing infrastructure as code—we've partnered with technical founders who transform developer pain points into platform opportunities. Companies like PagerDuty, Render, Fern, and LaunchDarkly didn't just ship better tools; they redefined how software gets made.

The developer economy has always been restless.

Innovation in the developer economy has always been restless. Languages rise and fall, framework preferences shift quickly, and developer love, once lost, rarely returns. But for years, these changes felt incremental: smoother CI/CD pipelines, cleaner API designs, faster deployment cycles. These were meaningful improvements, but not revolutionary ones. AI changed the rules entirely.

Coding proved early on to be a well-suited domain for AI—logical, structured, syntax-driven, with decades of open-source training data and established benchmarks for measuring quality. What started as a neat tool quickly became essential infrastructure. GitHub's annual revenue hit $2 billion in 2024, with Copilot driving over 40% of that growth. This is one proof point, but the trajectory is clear: we expect AI to write 95%+ of code by 2030 and already see this happening in many of our highest velocity companies.

The market has responded accordingly. Billions in venture capital have flowed into AI-powered developer tools, from coding assistants to autonomous debugging platforms. We've already seen blockbuster acquisitions reshape the competitive landscape—and this is still the early innings.

“Hello, World” meets “Hello, AI”

AI is ushering in Software 3.0 development, where natural language becomes the primary programming interface and models execute directly on instructions. In this paradigm shift, the fundamental principles of software development are changing—prompts are now programs, and LLMs function as a new type of computer.

This isn't just an incremental progression of developer tools. Software engineering is being radically reimagined from the ground up, with AI not just disrupting traditional workflows, but creating entirely new categories of developer platforms.

While much attention has focused on how AI augments existing developer workflows, we believe that is just the opening act of AI disruption in the developer economy. AI's transformative power has been so comprehensive that entirely new categories are emerging. Developers aren't simply using AI to enhance current practices—they're fundamentally changing how they engineer software as the nature of development itself evolves. We've already seen this transformation's first wave in core AI infrastructure. Now we're witnessing it reshape the broader developer workflow ecosystem:

Market map: Developer AI tooling and platforms

For a deeper dive, see our roadmaps on AI Infrastructure, Data 3.0 in the Lakehouse Era, and Voice AI, which complement this Software 3.0 roadmap.

Five themes driving our investment strategies in developer AI tooling and platforms

Theme 1: Supercharging developer productivity through AI augmentation

We're finally realizing the dream of developers being able to hand off mundane “grunt work” to AI, freeing up their time to focus on higher-level tasks. AI is automating the repetitive tasks that drain cognitive energy: debugging, code reviews, environment setup, incident response, and those endless low-level fixes that consume entire afternoons.

The shift is profound. Where developers once spent significant portions of their day on tedious maintenance work, testing cycles, and documentation, AI now tackles these time-sinks first and surfaces polished outputs for human review. This frees engineers to focus on what actually matters: architectural decisions, creative problem-solving, and high-impact feature development.

The performance gains can be staggering. Mean time to recovery (MTTR) can drop from days or hours to just minutes. Lead time for changes can compress significantly. Developer onboarding can shrink from months to days. These aren't marginal improvements; they're order-of-magnitude leaps that fundamentally change what small teams can accomplish.

Theme 2: Democratizing software development through AI

LLMs and emerging tooling are redefining who gets to build software by dismantling the technical barriers that once separated professional developers from everyone else. English is the hottest new programming language. Prompt-to-code and design-to-code platforms now enable individuals with zero traditional programming experience to build functional applications simply by describing what they want or uploading a mockup.

This shift fundamentally changes the ingredients for software innovation. Creativity, domain expertise, and product taste matter more than mastering syntax or memorizing frameworks. The best healthcare app might come from a doctor who understands patient workflows, not a developer who's memorized React patterns.

Meanwhile, the rise of agentic engineering is pushing us beyond the traditional human-in-the-loop model. Instead of requiring constant human oversight, platforms now enable autonomous AI agents to manage complex engineering workflows, orchestrate deployments, and identify and resolve bugs on their own. These systems don't just assist; they execute.

This dual movement of reducing technical barriers and increasing AI autonomy can unlock software creation for a vastly broader community. Anyone who can articulate a vision and use AI tooling becomes a developer, which expands the possibilities for innovation and redefines what it means to be one.

Theme 3: Next-generation tools and techniques for AI-native development

Just as Software 2.0 democratized web development through critical infrastructure pieces—Auth0 eliminated months of complex authentication work, Stripe abstracted payment complexity, and Twilio turned SMS integration from a telecom nightmare into a few lines of code—we're witnessing the emergence of similar foundational layers for AI-native development.

New essential parts of the stack include:

- Memory & Context Management: Tools like Mem0, Zep, Subconscious, and foundational model labs themselves are racing to address the limitations of stateless LLMs. Where developers once built custom vector databases and retrieval systems, "memory as a service" tools now provide plug-and-play memory layers that maintain conversation context, user preferences, and long-term learning—critical for any AI application that needs to feel intelligent beyond a single interaction.

- AI-Native Frameworks: Just as React transformed UI development, frameworks like LangChain, LlamaIndex, DSPy, and CrewAI are abstracting the complexity of prompt chaining, tool use, and multi-step reasoning. Developers no longer need to hand-roll retry logic, token management, or agent orchestration; they can focus on business logic while these frameworks handle the plumbing.

- Runtime & Deployment Infrastructure: Modal, fal, Replicate, and Fireworks are to AI what Vercel was to Next.js—removing the GPU procurement headache and cold-start problems that plague AI applications. They eliminate the DevOps bottleneck with a simple function call.
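To make the memory layer idea concrete, here is a minimal sketch of what "plug-and-play memory" means from the application's side. It is not how Mem0, Zep, or any other product is implemented: `MemoryStore`, `remember`, `recall`, and `build_prompt` are hypothetical names, and simple word overlap stands in for the embedding-based retrieval a real service would likely use.

```python
from dataclasses import dataclass, field


@dataclass
class MemoryStore:
    """Toy per-user memory: stores short facts, recalls them by word overlap."""

    facts: dict[str, list[str]] = field(default_factory=dict)

    def remember(self, user_id: str, fact: str) -> None:
        self.facts.setdefault(user_id, []).append(fact)

    def recall(self, user_id: str, query: str, k: int = 2) -> list[str]:
        query_words = set(query.lower().split())
        scored = []
        for fact in self.facts.get(user_id, []):
            overlap = len(query_words & set(fact.lower().split()))
            if overlap:
                scored.append((overlap, fact))
        # Highest overlap first; the sort is stable, so ties keep insertion order.
        scored.sort(key=lambda pair: pair[0], reverse=True)
        return [fact for _, fact in scored[:k]]


def build_prompt(store: MemoryStore, user_id: str, message: str) -> str:
    """Prepend recalled facts so a stateless model sees prior context."""
    memories = store.recall(user_id, message)
    context = "\n".join(f"- {m}" for m in memories)
    return f"Known about this user:\n{context}\n\nUser: {message}"


store = MemoryStore()
store.remember("u1", "prefers python for scripting")
store.remember("u1", "deploys on fridays")
prompt = build_prompt(store, "u1", "which python version should I use")
```

The point of the sketch is the interface: the application only calls `remember` and `recall`, while storage and retrieval quality become the memory provider's problem.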

Software 2.0's killer insight was continuous deployment with safety nets and iterative learning. LaunchDarkly, for example, fundamentally changed how products were shipped—teams could ship daily, test with 1% of users, and roll back instantly. This tight feedback loop accelerated learning cycles from months to hours.

The AI-native equivalent is still being written, but the contours are clear. Where Software 2.0 companies asked "Is this feature working?", AI-native tools must answer "Is this prompt/model/chain producing accurate, safe, and useful outputs?" The challenge is exponentially more complex—we're not just tracking conversion rates but evaluating nuanced language understanding, factual accuracy, and alignment with user intent.

The emerging eval and observability landscape is converging on three critical capabilities:

- Prompt versioning as feature flags: Companies like HoneyHive and PromptLayer let users A/B test prompt variations in production, with automatic rollback on performance degradation.

- Continuous eval pipelines: Platforms such as Bigspin.ai provide not just pre-deployment testing but real-time production monitoring of model outputs against golden datasets and user feedback.

- Semantic metrics beyond traditional analytics: We’re moving from "click-through rate" to "helpfulness score" and "factual accuracy rate." Software 3.0 requires new tools such as Judgment Labs and techniques such as LLM-as-judge for high-quality evals and metric definition.
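The "prompts as feature flags" idea can be sketched in a few lines. This is an illustration under stated assumptions, not the API of any vendor named above: `PromptFlag` and its fields are hypothetical, users are bucketed by a hash of their ID so each user consistently sees the same variant, and the quality scores are assumed to come from some upstream eval such as an LLM-as-judge.

```python
import hashlib
from dataclasses import dataclass, field


@dataclass
class PromptFlag:
    stable: str            # known-good prompt
    candidate: str         # prompt variant under test
    rollout_percent: int   # share of users who see the candidate (0-100)
    min_score: float       # roll back if the candidate's mean score drops below this
    min_samples: int = 20  # wait for this many scores before judging
    scores: list[float] = field(default_factory=list)

    def _bucket(self, user_id: str) -> int:
        # Stable hash so a given user always lands in the same bucket (0-99).
        digest = hashlib.sha256(user_id.encode("utf-8")).hexdigest()
        return int(digest, 16) % 100

    def prompt_for(self, user_id: str) -> str:
        if self._bucket(user_id) < self.rollout_percent:
            return self.candidate
        return self.stable

    def record_score(self, score: float) -> None:
        """Record a quality score for a candidate response; roll back if needed."""
        self.scores.append(score)
        if len(self.scores) >= self.min_samples:
            mean = sum(self.scores) / len(self.scores)
            if mean < self.min_score:
                self.rollout_percent = 0  # automatic rollback to the stable prompt


flag = PromptFlag(
    stable="Summarize the ticket in two sentences.",
    candidate="Summarize the ticket in two sentences and name the root cause.",
    rollout_percent=1,
    min_score=0.7,
)
```

Setting `rollout_percent` to 0 mirrors the instant rollback that feature flags brought to conventional releases, with no code deploy required.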

The winner in this space will transform AI development from "ship and pray" to confident, data-driven iteration. Unlike traditional testing, LLM behavior is non-deterministic—the platform must seamlessly bridge local development evals with production monitoring, enable hot-swapping of prompts and models without code deploys, and provide intelligent regression detection that catches when improvements in one use case degrade another.
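One way to picture the regression detection described here is a per-use-case comparison between the current setup and a proposed change. The sketch below is illustrative only: `find_regressions` is a hypothetical helper, the scores are assumed to come from an existing eval suite, and averaging several runs is a simple stand-in for handling non-deterministic outputs.

```python
from statistics import mean


def find_regressions(
    baseline: dict[str, list[float]],
    candidate: dict[str, list[float]],
    tolerance: float = 0.02,
) -> dict[str, float]:
    """Return use cases whose mean score dropped by more than `tolerance`.

    Each use case maps to scores from repeated runs, averaged here to
    smooth out run-to-run variation in model output.
    """
    regressions = {}
    for use_case, base_scores in baseline.items():
        new_scores = candidate.get(use_case)
        if not new_scores:
            continue  # use case not evaluated for the candidate
        drop = mean(base_scores) - mean(new_scores)
        if drop > tolerance:
            regressions[use_case] = round(drop, 4)
    return regressions


baseline_scores = {"summarize": [0.90, 0.90], "extract": [0.80, 0.80]}
candidate_scores = {"summarize": [0.95, 0.95], "extract": [0.70, 0.70]}
report = find_regressions(baseline_scores, candidate_scores)
```

Here the candidate improves summarization but degrades extraction, which is exactly the cross-use-case tradeoff that an aggregate score would hide.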

Most critically, it must scale human-in-the-loop feedback efficiently—routing edge cases to expert reviewers and incorporating that signal into automated evals to create a flywheel of improving accuracy. We're still in the early innings, but the convergence of these building blocks will unlock the same step-function increase in development velocity we saw in Software 2.0. Except this time, we're not just changing pixels on a screen—we're fundamentally reimagining how software thinks and reasons.
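The human-in-the-loop flywheel can be sketched as a small triage loop. Everything here is an assumption for illustration: `ReviewLoop` is a hypothetical class, and each example is assumed to arrive with a `judge_confidence` value produced by an automated evaluator.

```python
from dataclasses import dataclass, field


@dataclass
class ReviewLoop:
    confidence_threshold: float = 0.7
    review_queue: list[dict] = field(default_factory=list)
    golden_set: list[dict] = field(default_factory=list)

    def triage(self, example: dict) -> str:
        """Send low-confidence examples to experts; accept the rest automatically."""
        if example["judge_confidence"] < self.confidence_threshold:
            self.review_queue.append(example)
            return "human_review"
        return "auto_accepted"

    def resolve(self, expert_label: str) -> dict:
        """Attach an expert label to the oldest queued example and add it
        to the golden set that future automated evals are checked against."""
        example = self.review_queue.pop(0)
        labeled = {
            "input": example["input"],
            "output": example["output"],
            "label": expert_label,
        }
        self.golden_set.append(labeled)
        return labeled


loop = ReviewLoop()
easy = {"input": "2+2?", "output": "4", "judge_confidence": 0.95}
hard = {"input": "Is this clause enforceable?", "output": "Yes", "judge_confidence": 0.40}
routes = [loop.triage(easy), loop.triage(hard)]
labeled = loop.resolve("incorrect")
```

Each resolved edge case grows the golden set, so expert time is spent only where the automated judge is unsure and that judgment is reused afterward.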

Theme 4: Reimagining new engineering interactions in the AI era

If MCP (Model Context Protocol) emerges as the de facto standard, several important dynamics will likely follow. First, context itself will become both composable and portable, enabling information to move seamlessly across different systems and applications. This shift will give rise to a new era of context engineering, where shaping, routing, and curating context becomes a discipline in its own right—analogous to how data engineering evolved alongside databases.

Organizations will invest in pipelines, tooling, and governance models designed to optimize the quality, provenance, and timeliness of contextual inputs. Small differences in context assembly can yield significant performance gains for models, creating competitive advantages for teams that can most effectively orchestrate context across internal and external sources.

We also expect to see the emergence of business-centric MCP clients—tools built specifically for enterprise use cases and workflows, rather than being limited to developer-focused environments like IDEs. These clients will increasingly incorporate context engineering features out of the box: adaptive context windows, audit trails for decision-critical prompts, and automated context compression or expansion depending on the task.
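As a rough illustration of context engineering, the sketch below assembles context under a token budget and records an audit trail of what was included and where it came from. It does not represent MCP or any specific client: `Snippet`, `estimate_tokens`, and `assemble_context` are hypothetical, relevance scores are assumed to be supplied by some upstream ranker, and counting whitespace-separated words is a crude substitute for a real tokenizer.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Snippet:
    source: str       # provenance, e.g. a document or system name
    text: str
    relevance: float  # assumed to come from an upstream ranking step


def estimate_tokens(text: str) -> int:
    # Crude assumption: one token per whitespace-separated word.
    return len(text.split())


def assemble_context(
    snippets: list[Snippet], budget_tokens: int
) -> tuple[str, list[dict]]:
    """Greedily pack the most relevant snippets that fit the budget and
    return the context plus an audit trail covering every candidate."""
    chosen: list[str] = []
    audit: list[dict] = []
    used = 0
    for snippet in sorted(snippets, key=lambda s: s.relevance, reverse=True):
        cost = estimate_tokens(snippet.text)
        included = used + cost <= budget_tokens
        if included:
            chosen.append(snippet.text)
            used += cost
        audit.append({"source": snippet.source, "tokens": cost, "included": included})
    return "\n".join(chosen), audit


candidates = [
    Snippet("crm", "a b c", 0.9),
    Snippet("wiki", "d e f g", 0.5),
    Snippet("tickets", "h i", 0.7),
]
context, audit_trail = assemble_context(candidates, budget_tokens=5)
```

The audit trail is what makes a decision-critical prompt reviewable after the fact: it shows which sources reached the model and which were dropped for lack of budget.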

In the long run, MCP's standardization could elevate context engineering from a behind-the-scenes task into a first-class product layer—where businesses differentiate not just on data or model access, but on their ability to engineer context that is richer, cleaner, and more actionable than their competitors.

Theme 5: "Agent experience" is the new "developer experience"

As AI agents increasingly take on responsibilities within traditional developer workflows, the very definition of a "user" of developer tools is undergoing fundamental transformation. AI coding agents are no longer merely subordinate co-pilots assisting human developers—they're beginning to take the wheel themselves, autonomously generating, modifying, testing, and deploying software.

This marks a paradigm shift where AI agents are emerging as "first-class" users of software, requiring tools that go far beyond traditional UX conventions built for human cognition and interaction. Instead of optimizing solely for "developer experience" (DX), we're now entering an era where tooling must also prioritize "agent experience" (AX).

We see startups embracing this shift by reimagining interfaces to be more legible, navigable, and controllable by AI agents, who parse code and interfaces differently from humans. Early signs of this transition include synthetic browsers tailored for agent exploration, agent-to-agent orchestration platforms, computer use interfaces that facilitate autonomous execution and system interaction, and documentation protocols optimized for agent discovery and access.

Simultaneously, functional areas like documentation and authentication are being restructured to be increasingly machine-readable. The best developer tools of tomorrow won't just serve humans—they'll support hybrid ecosystems where AI agents and developers collaborate, or even environments where AI agents operate entirely independently.
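To show what "machine-readable" can mean in practice, here is a sketch that turns an ordinary function into a structured description an agent could parse. The output shape is loosely modeled on the JSON-Schema-style tool descriptions common in tool-calling interfaces. It is not the exact format of MCP or any particular vendor, and `describe_tool` and `create_invoice` are hypothetical.

```python
import inspect
import json
from typing import get_type_hints

# Map a few Python types to JSON Schema type names; anything else is
# treated as a string in this simplified sketch.
_JSON_TYPES = {str: "string", int: "integer", float: "number", bool: "boolean"}


def describe_tool(func) -> dict:
    """Build a machine-readable description from a function's signature."""
    hints = get_type_hints(func)
    signature = inspect.signature(func)
    properties = {}
    required = []
    for name, param in signature.parameters.items():
        properties[name] = {"type": _JSON_TYPES.get(hints.get(name), "string")}
        if param.default is inspect.Parameter.empty:
            required.append(name)
    return {
        "name": func.__name__,
        "description": inspect.getdoc(func) or "",
        "parameters": {
            "type": "object",
            "properties": properties,
            "required": required,
        },
    }


def create_invoice(customer_id: str, amount_cents: int, send_email: bool = True) -> str:
    """Create an invoice and return its ID."""
    return f"inv_{customer_id}_{amount_cents}"


spec = describe_tool(create_invoice)
spec_json = json.dumps(spec, indent=2)
```

A human reads prose documentation; an agent is better served by a description like `spec`, where argument names, types, and required fields are explicit rather than implied.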

A generational opportunity to architect the foundational infrastructure that will power the next era of software innovation

We're witnessing the most fundamental transformation in software development since the move to cloud computing. The entire developer tooling landscape is undergoing a complete replatforming. New categories are crystallizing and traditional workflows are being rebuilt from first principles. Unlike previous technology transitions, this one compounds: AI tools create better AI tools, generating feedback loops that accelerate innovation at unprecedented speed.

The AI-driven disruption of the developer economy is only just beginning. We remain mindful that AI is not perfect. Significant work remains, especially in the last mile, to bridge critical gaps in areas such as privacy, monitoring, reliability, context window limitations, and guardrails before widespread enterprise adoption is possible. We remain highly optimistic that founders will be able to tackle these challenges with time.

The opportunity ahead is massive for builders in Software 3.0—not just for incremental productivity gains, but for fundamentally reimagining how software gets built, who builds it, and what becomes possible when development cycles compress from months to minutes. We're excited to support highly technical early-stage teams building in Software 3.0 and have already partnered with emerging leaders in this ecosystem. Janelle Teng, Lauri Moore, Lindsey Li, and Libbie Frost would love to hear from you if you are building in this space or have any feedback on this roadmap.