Perspective: Powering the next generation of data scientists

Data scientists are drivers of innovation and productivity for their teams—it’s about time they finally have better tools and platforms to support increasingly complex analyses.

In the late 2000s, analytics experts at LinkedIn and Facebook began to push the application of statistics to support the growth of digital businesses. What was once an emerging practice for companies—collecting and analyzing troves of data—became critical for organizations of all sizes, as leaders began to drive strategy based on the insights derived from complex analysis. Data science became the next frontier and a competitive business advantage. Naturally, the profession grew in popularity. For the past decade, these roles have been in high demand by employers, and today, the U.S. Bureau of Labor Statistics reports the field will grow about 28% through 2026.

However, while developer tools have vastly improved over the past five to ten years, data scientists haven’t seen similar advancements in tools and workflows.

As the modern cloud data stack continues to transform, we see the empowerment of data scientists to be one of the largest drivers of innovation and productivity over the next decade.

The work and friction of data science

Once data engineers take data in its rawest form and put it in a structured or semi-structured format (often in a data warehouse or data lake like Firebolt, Snowflake, or Delta Lake), the work of the data scientist begins.

Data scientists are responsible for transforming and interpreting data, often in a format that can be consumed by other business users. However, data scientists face a number of friction points across their work today, ranging from the technical to the organizational. For example, data scientists often struggle with transforming data in varied formats from different sources and processing increasingly large datasets. Cross-functional communication can also be a hurdle; teams need to receive the right data pipelines in order to collaborate with other team members and deliver on the right models, metrics, and insights.

Friction points are growing in number, scope, and complexity.

Friction points are growing in number, scope, and complexity as data science teams scale with organizations, the volume of accessible data increases, data structures become more varied in format, and business users request insights that require more complex computation.

Data science tools and workflows

Innovation is directly proportional to a team's ability to derive value from its data, yet data scientists are still some of the most under-resourced team members. As a result, there is a huge opportunity for the emerging products and companies targeting the data science personae to take this ever-growing and expanding budget and allow businesses to get the most out of the scarce data scientist resources.

Transformation Tools

Transformation is the first step after data engineers have moved data into a warehouse using an ELT tool. ELT (Extract, Load, Transform) represents a fundamental shift in data science wherein data is first extracted from a variety of different sources, loaded by data engineers using tools like Fivetran or Airbyte into a data warehouse like Snowflake, and then transformed by data scientists. The data transformation process often involves adding or deleting columns, organizing different columns into databases that are consumable by other users, and merging data from different sources together. Transformations are often performed using SQL, but data scientists can also work in Python to produce different types of operations.

In the transformation space, new tools allow data scientists to work in their preferred languages and frameworks (typically SQL and Python) to perform increasingly complex transformations on larger datasets. This means data scientists can rely less on data engineers to complete downstream tasks, such as the extract and load phase of the data pipeline.

One of the most popular transformation tools is the open-source project dbt, which also offers a commercial product that is a managed version of the open-source project. dbt enables SQL-fluent users to write queries and create transformation pipelines. Prior to dbt, less technical data scientists often had to collaborate with data engineers to run most transformations in a data warehouse. dbt also marries documentation, lineage, and testing with the codebase, making it easier for other reviewers of the code to understand the full history of development on a pipeline.

For complex transformations where data scientists and engineers are working closely with one another to wrangle raw data, dbt has emerged as the dominant independent framework for creating models. dbt performs transformations on data inside the data warehouse, which means that any tool including Looker can be used to access the transformed raw data.

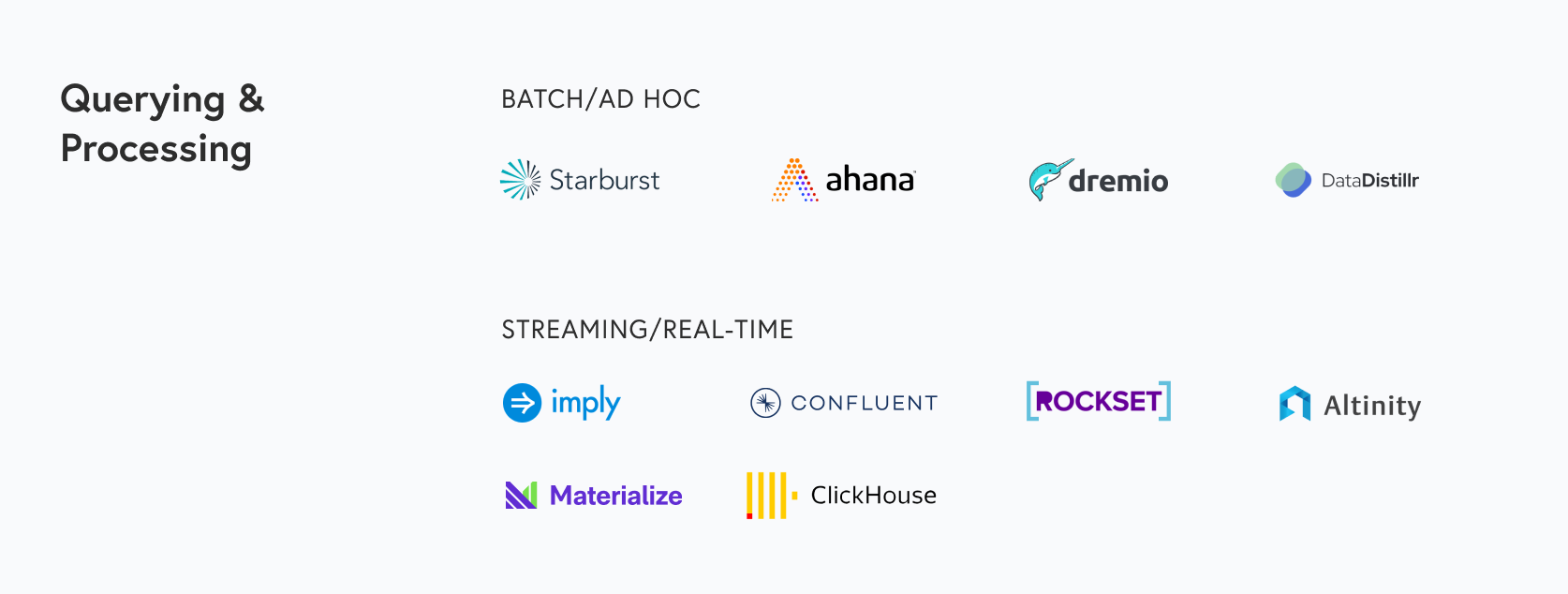

Querying and Processing

Once data is accessible either in more structured forms like relational databases or in a data lake, data scientists perform queries to retrieve specific sets of data needed for insights and business analytics.

Often queries can involve massive amounts of data, which require processing engines to help speed up and simplify the querying process. Processing engines for structured data include SparkSQL (focused on the Spark ecosystem), Apache Flink (for real-time data), kSQL, and more. These engines, whether real-time or batch, function by optimizing computational processes on data. These processes can include parallelizing data, which means chunking the data into separate dataframes and processing separately.

For querying data from multiple data sources, data scientists also have query engines in their toolkit. These engines allow data scientists to use SQL queries on disparate data silos, so that they can query the data where it already exists rather than having to move around massive amounts of data to run queries.

Distributed query engines like Apache Drill allow for a single query to merge data from various sources while performing optimizations that work best for each data store. Other popular distributed query engines include projects like Presto/Trino, on top of which companies like Ahana and Starburst offer managed cloud products, and Apache Pig. These distributed engines allow for querying of data directly on your data lake, which is the promise of the Lakehouse concept that continues to garner interest from the data community.

To optimize for processing and query times based on the type of data, data scientists often opt for specific types of databases that fit their use case. For example, for processing real-time data with a time component, many organizations are now choosing OLAP databases like Apache Druid. Druid enables fast querying of time-series data, as well as non-time-series data, that is either real-time or historical. Our portfolio company Imply Data offers an enterprise cloud version of Druid, in order to reduce some of the work required for management and deployment of an open-source database. Imply offers an end-to-end suite of tools from a compliance manager to connectors to visualization tools, to enable enterprises to utilize Druid for both business intelligence and operational intelligence use cases.

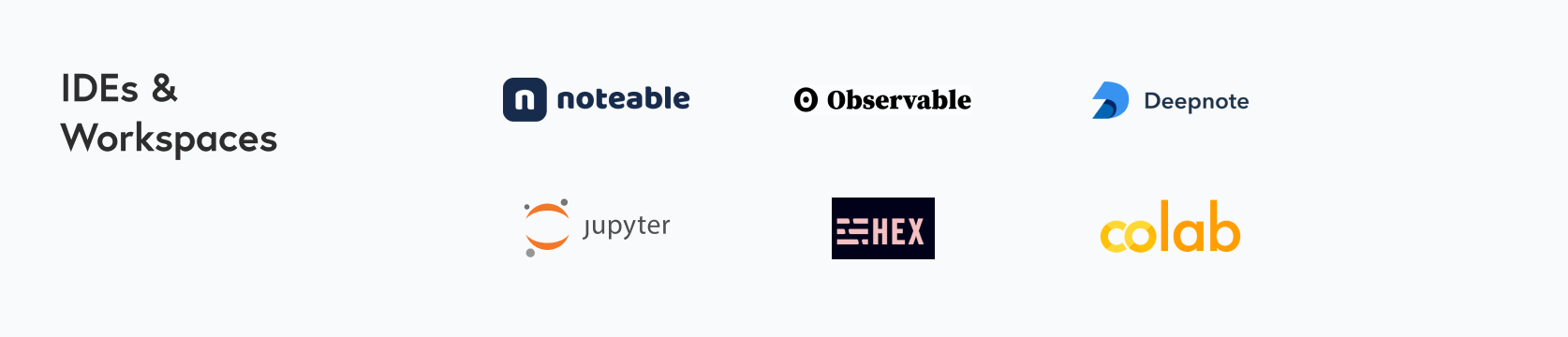

IDEs and Workspaces

With data preparation work completed and the right processing/querying infrastructure in place, data scientists can now begin to conduct analyses, build models and share their datasets. This piece of the workflow happens in integrated development environments (IDEs) and workspaces (often notebooks).

IDEs, which combine different automated application development tools into one interface, can serve as a fast way to test and debug code. For data science-specific IDEs like Anaconda’s Spyder or PyCharm, features often include code completion or specific debugging tools for Python models.

However, IDEs can be hard for users without a coding background to interpret because they are designed for users who can read and write code. Additionally, IDEs are not designed for team collaboration and lack features that allow multiple users to easily contribute to code. Therefore, IDEs are often substituted out in favor of notebooks. Notebooks are tools that allow data scientists to combine text that explains their thoughts and work, with code and graphs. Often a supplement to using an IDE for building models, notebooks help with more granular exploration of data while allowing data scientists to understand the data they are working with and share lessons with different stakeholders. Data scientists can also experiment with basic models in notebooks.

Once completed, data scientists can share these notebooks, and users can quickly read the context of an experiment, run the section of code, make changes, and review any resulting graphs or outputs.

The most popular is the Jupyter notebook, an open-source notebook designed for data science and machine learning experimentation. While Jupyter is a top choice for many data scientists, it does not perfectly solve issues around communicating and collaborating on experiments. To solve these problems, notebooks like Observable, Hex.tech, Deepnote, and Noteable have emerged that allow for more powerful visualizations and collaboration.

Noteable, for example, allows users with varying degrees of technical proficiency to collaborate on the whole data lifecycle, not only the outcomes. This capability has become important for use cases like ETL workflows, exploration and visualization of data, and machine learning modeling where cross-functional teams are interacting in the same tool to make decisions and glean insights. The creation of this shared context encourages more exploration, improves productivity, and reduces communication costs.

Data Science Platforms

For more complex models requiring deployment at scale, data scientists may also select a more end-to-end data science platform with built-in infrastructure for building, testing and shipping models. For organizations that have already implemented Apache Spark, Databricks is often the tool of choice. Databricks allows data scientists to instantly spin up Spark clusters and access a suite of tools for ETL, processing and querying, and model creation and deployment. Databricks offers numerous products (like MLFlow for end-to-end model creation, tracking and deployment, or Databricks SQL for running serverless SQL queries on Databricks Lakehouses).

Additional data science platforms include DataRobot and Dataiku, both of which offer out-of-the-box data preparation tools, models, automated model selection, training and testing, as well as model deployment and monitoring. DataRobot is more focused on allowing any user to work with out-of-the-box AutoML, while Dataiku allows more technical users to access end-to-end tooling for data science with AutoML as a feature. While DataRobot’s platform abstracts away some complexity, with Dataiku users can edit specific features and models.

Case study: Answering ambitious questions with Coiled

An increasing number of platforms are emerging to allow data scientists to use their preferred frameworks and languages to build and manage data pipelines. Our portfolio company Coiled is another emerging data science platform, founded by the creator of the open-source project Dask. Dask allows data scientists to work with increasingly large datasets without having to request assistance from data engineers, by enabling data scientists to parallelize their data with Python.

Prior to Coiled, data scientists would need the skills of a developer to spin up their own compute cluster. However, Coiled abstracts away this work, by automating the process of setting up and managing their Dask cluster. Data scientists can use their Dask dataframe locally but run the Coiled command to scale their workloads automatically without needing to worry about managing their compute. Coiled also offers an interface for users to monitor hosting costs and manage authentication for various datasets. In the future, platforms like Coiled will be able to build specific tools that automate downstream processes for querying, model building, and deployment.

What is next for data science

We’re excited about multiple categories of tools for data scientists and intrigued by the platforms that solve both the technical and organizational problems facing this technical specialty. On the technical front, there’s vast opportunity for new tools to help with transforming data in varied formats, processing increasingly large data sets, and configuring the right pipelines and models. These include frameworks for transforming data in data scientists’ preferred languages, processing engines for larger or real-time datasets, and platforms designed to enable a user to quickly spin up the right model in minutes. We are paying close attention to the evolution of use cases for real-time data, and observing more organizations attempt to replace batch data with real-time data in their data pipelines to improve the accuracy of their models. On the organizational side, we see an opportunity for a new generation of notebooks to improve collaboration for data scientists by enabling easier sharing and the ability to edit in real-time with colleagues.

Just as we’ve seen businesses become more developer-centric over the past decade, data scientists will also inherit the same gravitational pull within their organizations. If you are working on a product improving workflows for data scientists, please reach out to us at datainfra@bvp.com.