Bessemer Predicts: Robotics and physical AI

Six investor predictions on the state of robotics research and the commercialization of the physical AI ecosystem—plus founders' candid takes on what's evolving in the market in 2026.

“There will be 100,000x more robots on Earth in the next 10-20 years.”— Jeremy Levine, Partner at Bessemer Venture Partners

At a glance: Six predictions for robotics and physical AI in 2026

- We're in the GPT-2.5 moment for robotics. Capabilities are real, but the gap between lab performance and field deployment remains wide.

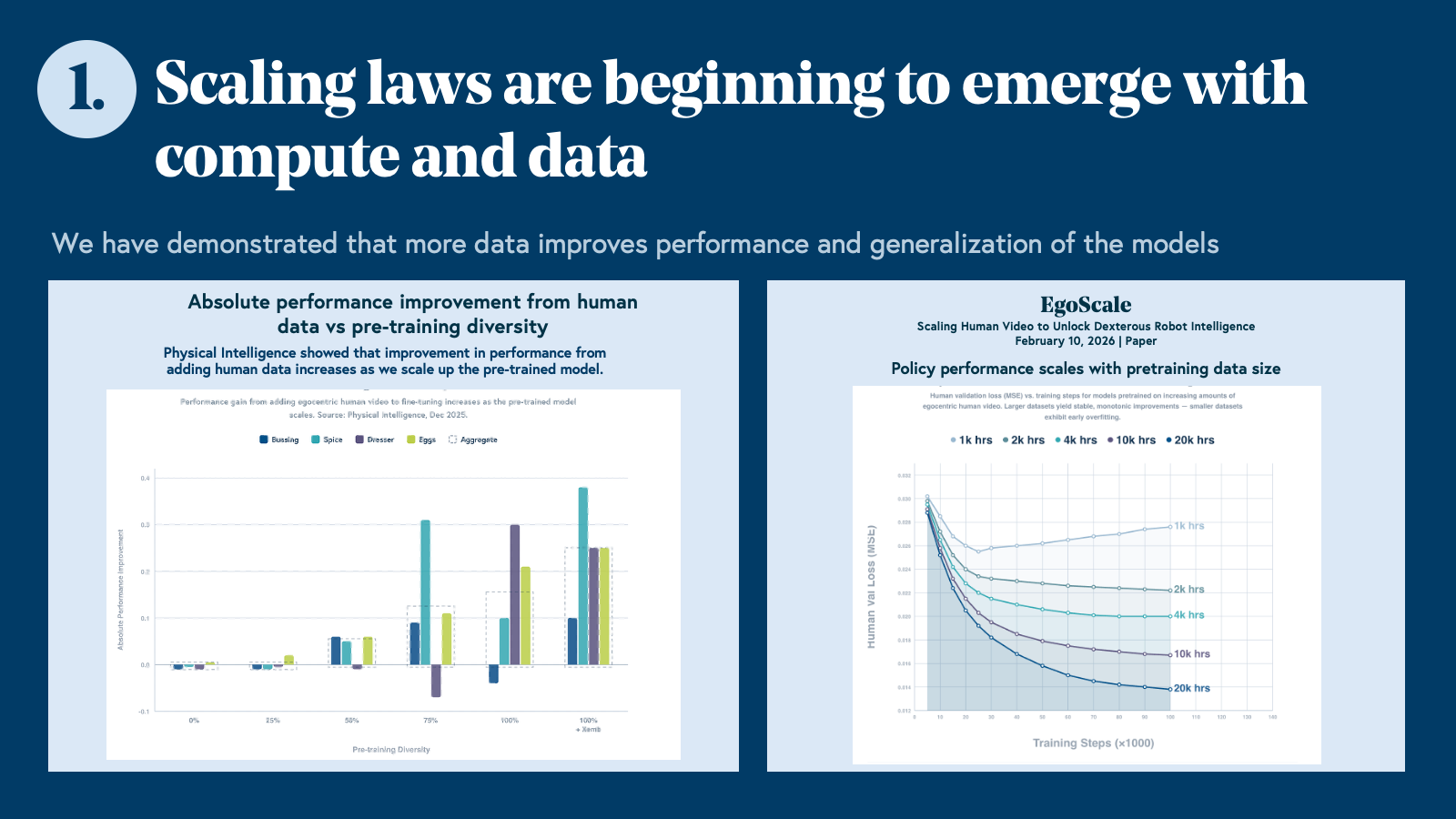

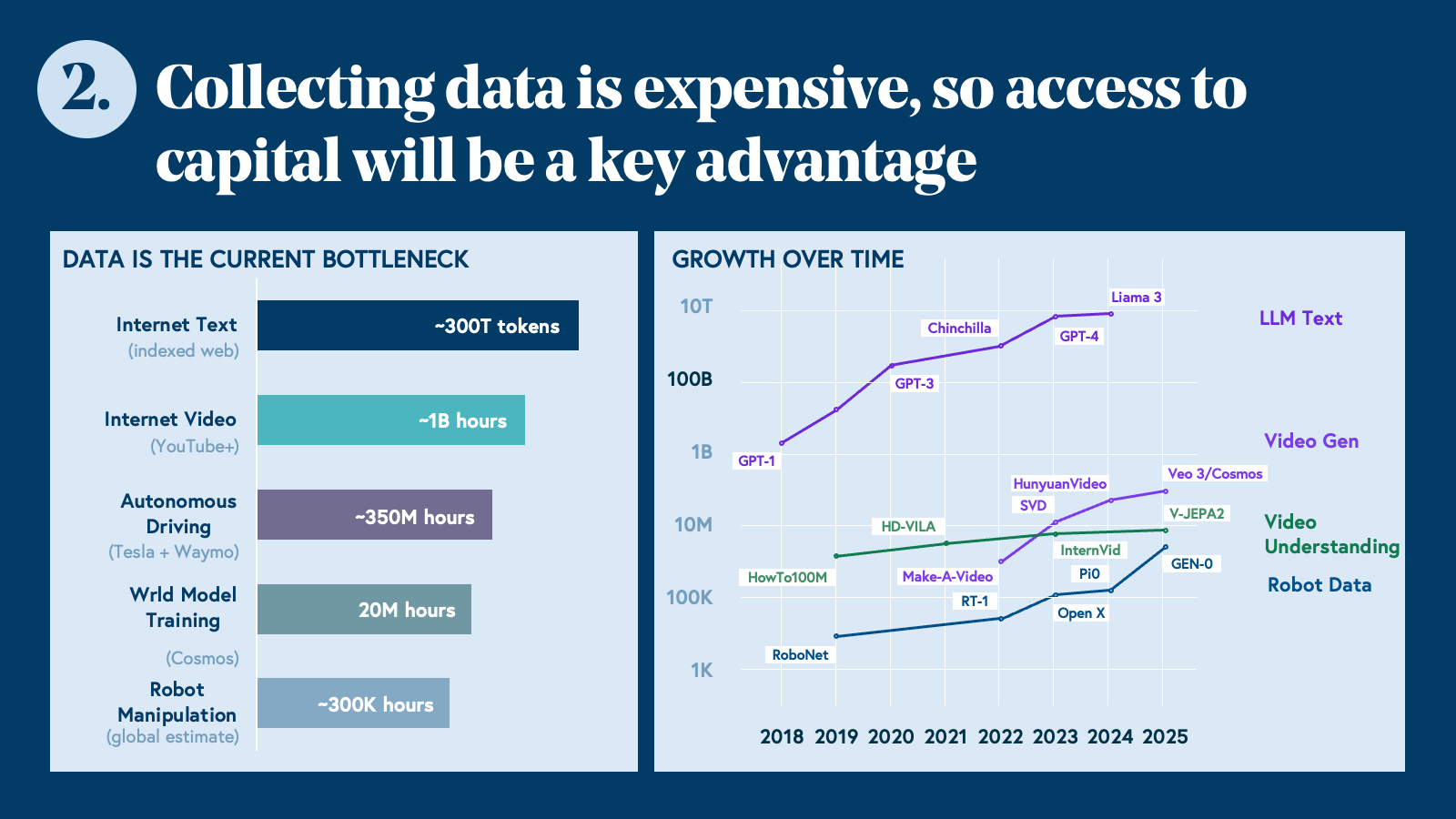

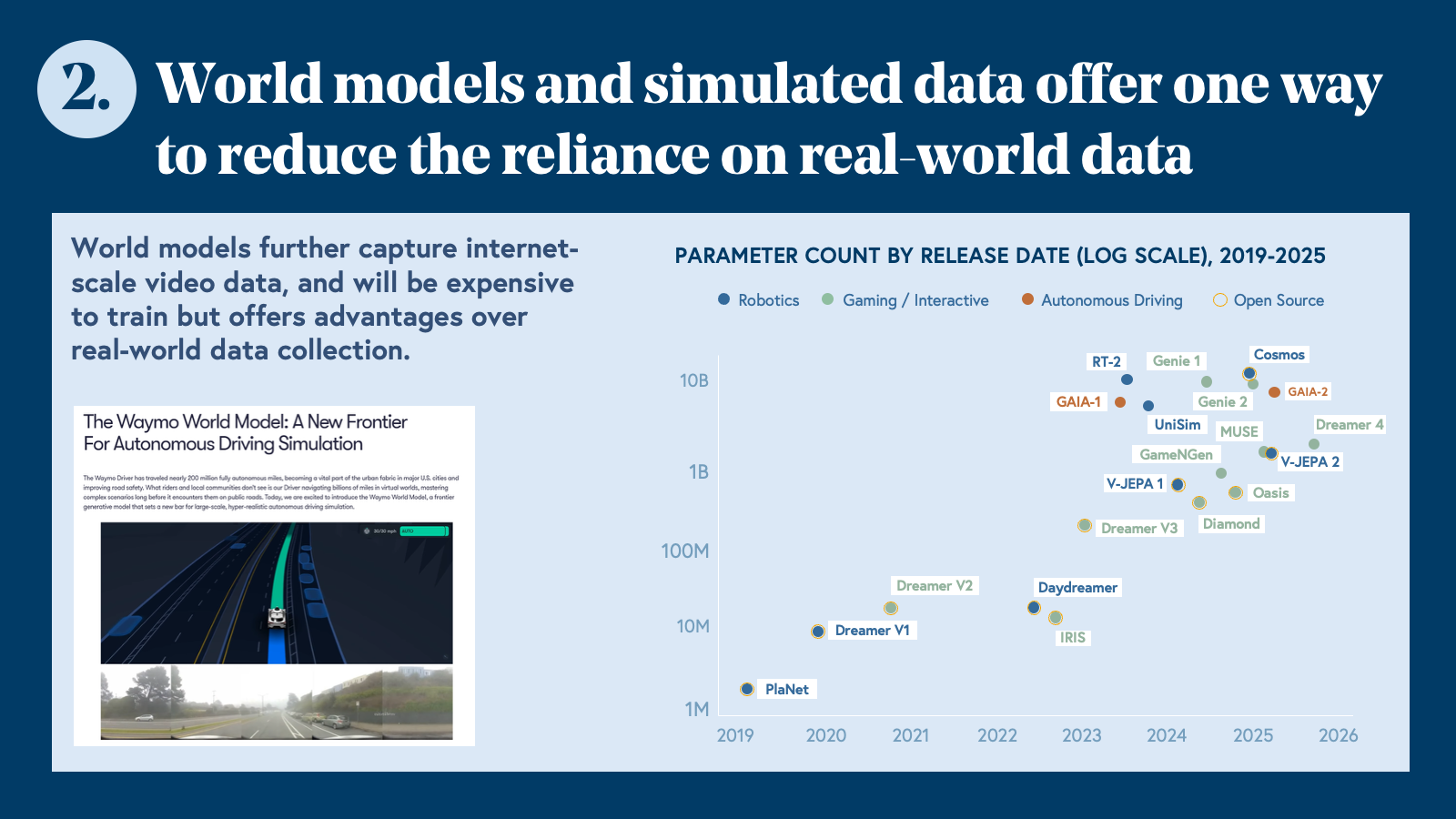

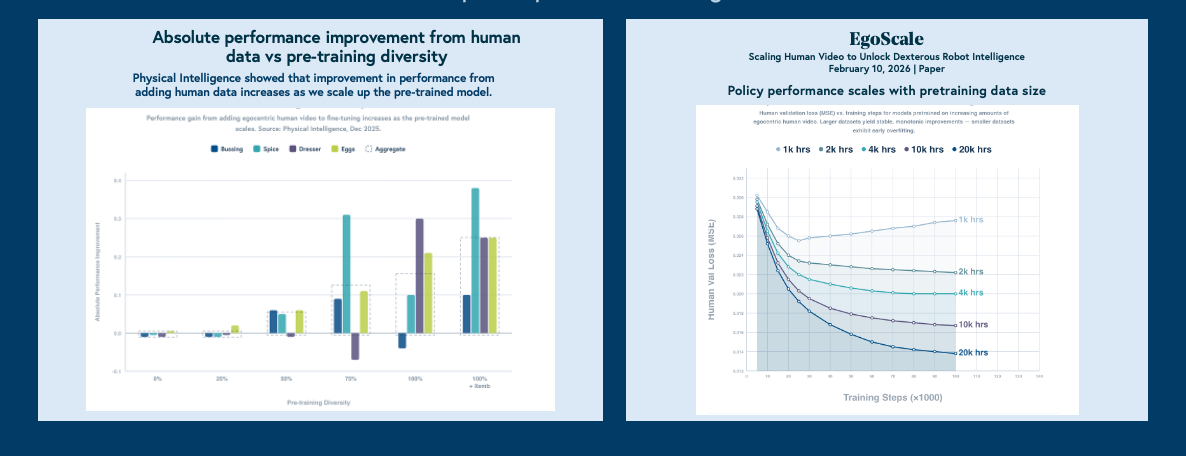

- Scaling laws are emerging. Data is expensive, capital is the moat. World models may be the shortcut.

- Talent concentration will crown winners quickly. This is not a market where 50 companies win.

- Near-term value will accrue to full-stack, vertically integrated players, not pure-play foundation model companies.

- Defense robotics will produce the first $50B+ IPOs in the category.

- There will be no robotics bubble. In fact, not enough capital is flowing into the industry.

Prediction 1: The ChatGPT moment for robotics is coming, but we're not there yet

The robotics industry is in its GPT-2.5 moment: foundation models are demonstrating real capability, scaling laws are beginning to emerge, but the gap between lab demos and production deployment remains wide.

Robotics sits roughly where language models did between GPT-2 and GPT-3. The demos are real. The underlying models are improving. The scaling laws that defined the LLM era are beginning to show up in robotics data. But generalized, reliable deployment in the physical world? That's still ahead of us.

"The path to real-world robotics isn't better control algorithms — it's better foundational models that understand the physical world: models that see, reason about space and physics, and predict what should happen next. Robot control becomes a thin layer on top. Companies collecting robot demos and fine-tuning policies can solve narrow tasks, but they will not scale. The foundation is what matters."— Armen Aghajanyan, Co-Founder & CEO, Perceptron

Getting to that generalized moment requires solving a problem even harder than the models themselves: data.

Prediction 2: Scaling laws are emerging. Data is expensive, capital is the moat—and world models may be the shortcut.

Robotics data is orders of magnitude scarcer than internet text — and closing that gap requires capital at a scale that will quickly separate the well-funded from the rest.

"Teleop alone will not be a successful data strategy. You should pull data from the internet or from simulators with reinforcement learning — you'll never get the scale or diversity you need from teleop alone."— Ian Glow, CEO, Zeromatter

"What separates the leaders from the hype in physical AI is this: they don't chase scale, they obsess over data quality. When you're building systems that operate in the physical world, bad data isn't just inefficient—it's dangerous. The defining advantage will be the companies with the strongest data flywheel, turning robot data into better decisions, better model improvements, and better deployments faster than everyone else."— Brian Moore, CEO & Founder, Voxel51

Prediction 3: Talent concentration will crown winners fast—this is not a market where 50 companies win

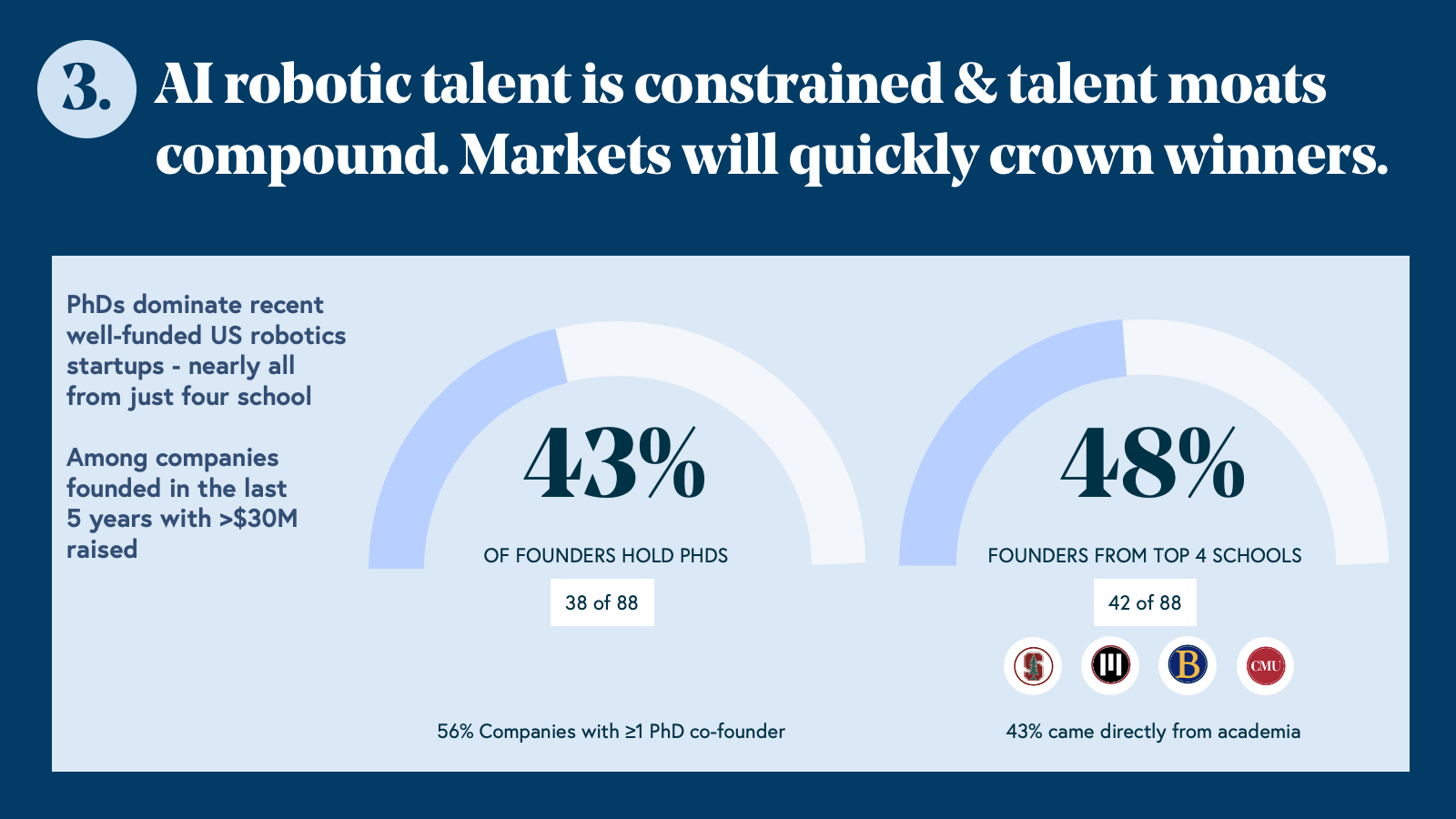

Among US robotics companies founded in the last five years that have raised $30M or more, 43% of founders hold PhDs. Nearly half (48%) come from just four institutions: Stanford, MIT, Berkeley, and Carnegie Mellon University. 56% of these companies have at least one PhD co-founder, and 43% came directly from academia. Robotics doesn’t have a broad-based talent ecosystem. It’s a narrow pipeline producing a small number of exceptionally capable people, and those people are already choosing sides.

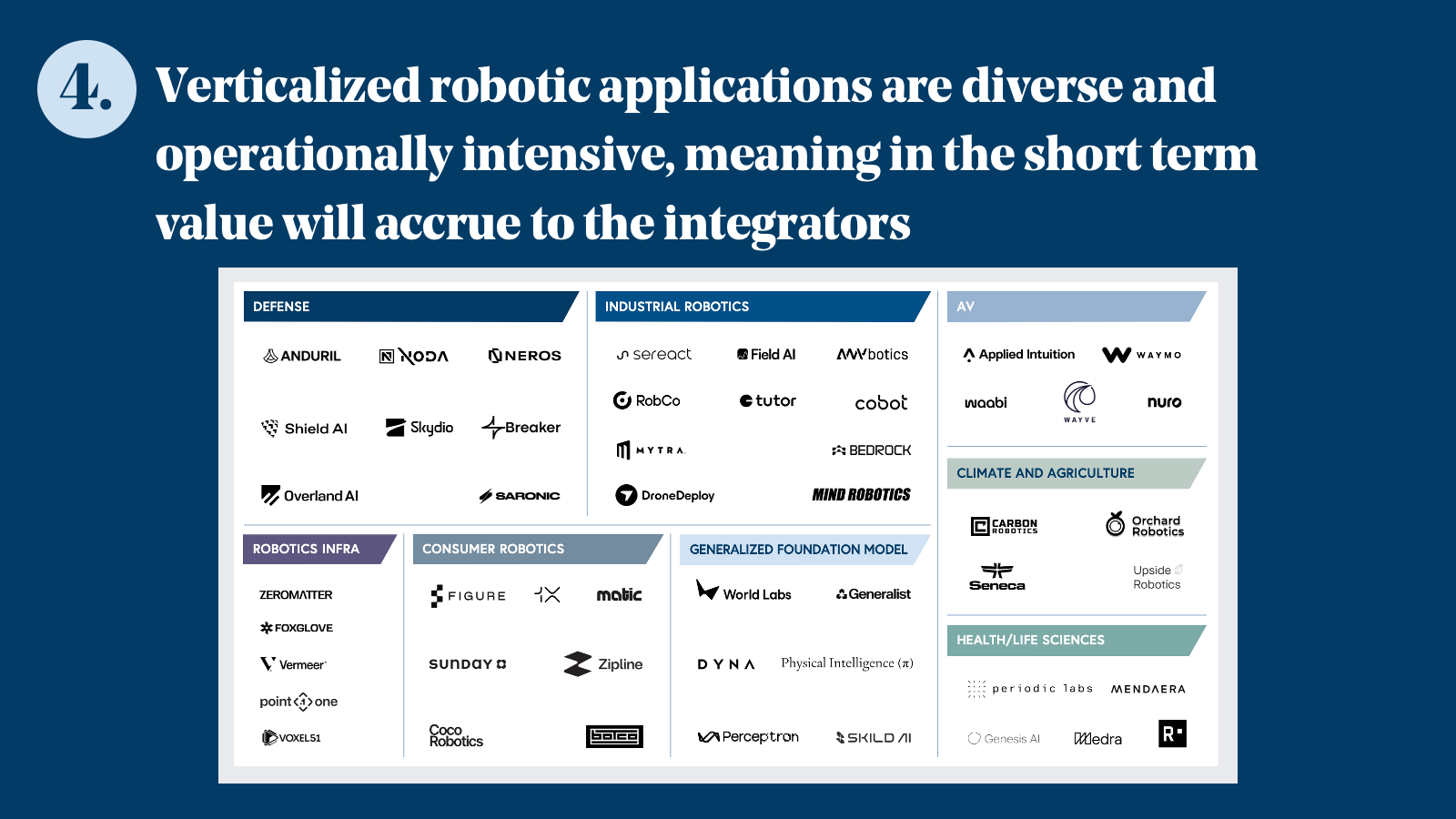

Prediction 4: Full-stack players will capture near-term value; pure-play foundation model companies will need to wait

Unlike the LLM era, where horizontal foundation model companies captured outsized value early, robotics foundation models aren't yet general enough to work out of the box—giving vertically integrated players a meaningful head start.

"The hardware cost curve is a major reason enterprise robotics is finally scaling. In construction, ground robots that ran $100,000 per unit a few years ago now run the same workflows for under $15,000. Docked drones have dropped from $200,000 to under $20,000 while getting more capable. Hardware needs to get cheap enough before deployment can scale — and we're crossing that threshold now."— Mike Winn, CEO & Co-Founder, DroneDeploy

"The defining advantage in physical AI will not be model novelty, but the quality of the data infrastructure behind it. As models converge, the companies that win will be the ones with the strongest data flywheel that turns robot data into better decisions, better model improvements, and better deployments faster than everyone else."— Adrian Macneil, Co-Founder & CEO, Foxglove

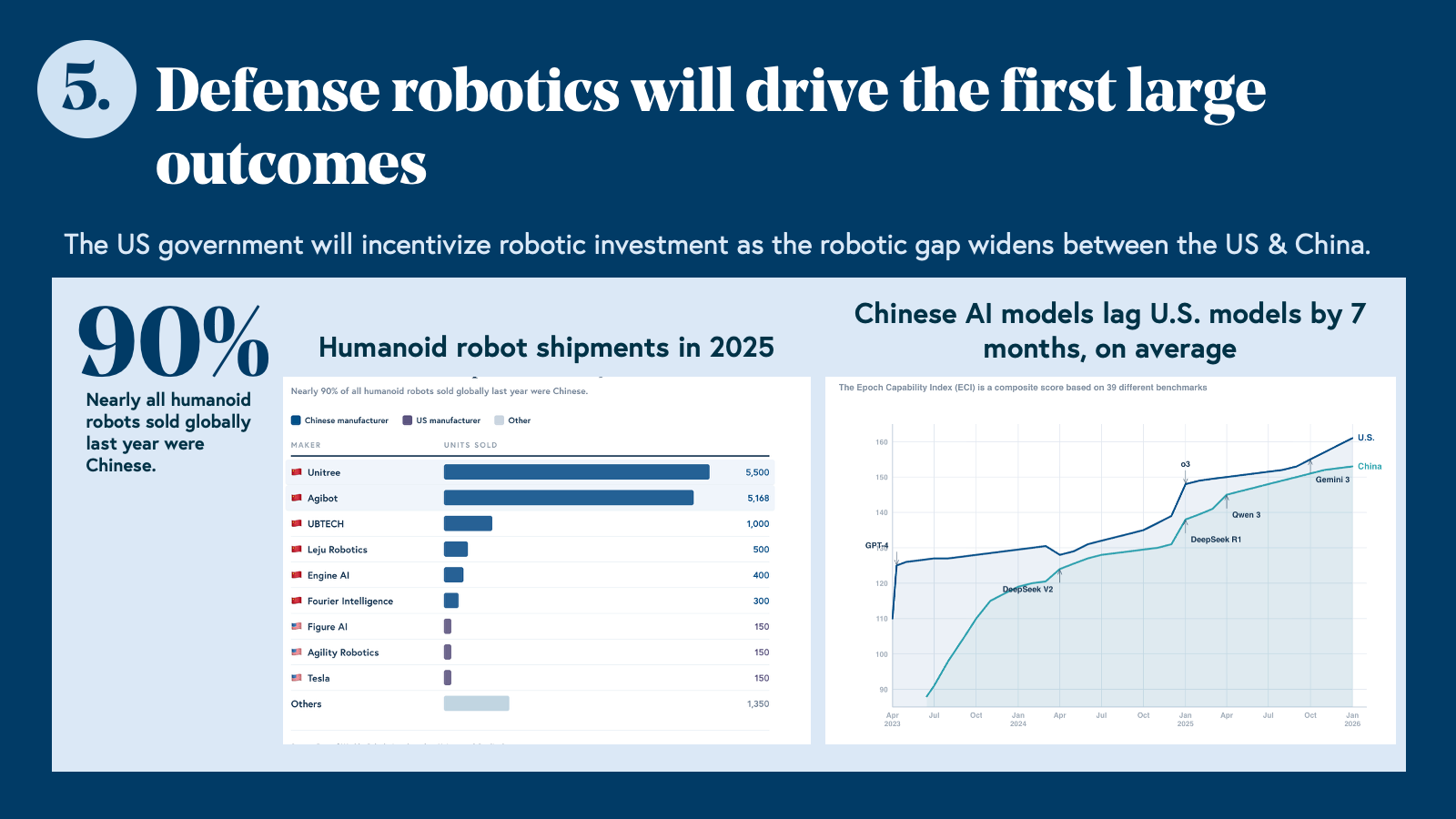

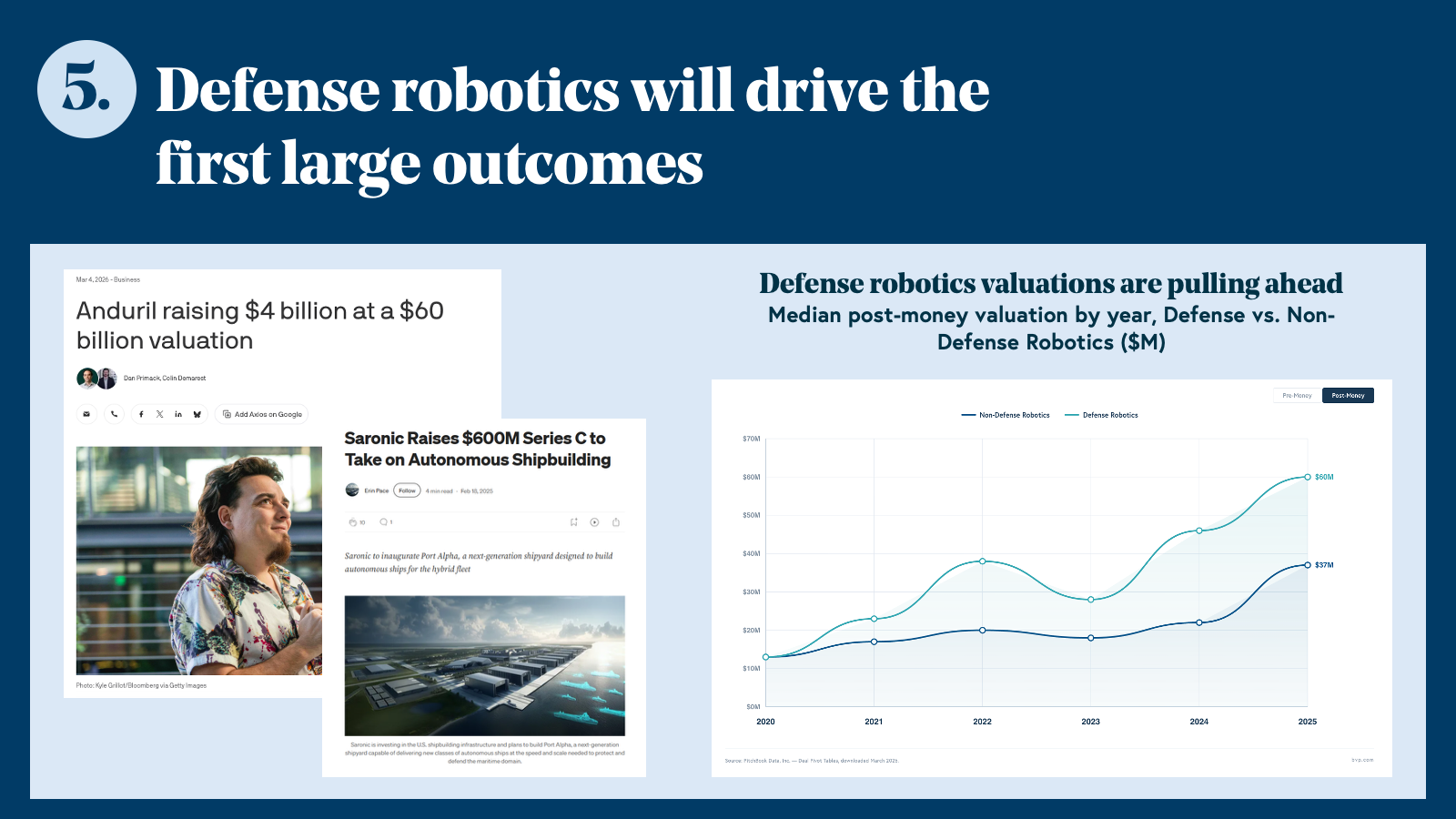

Prediction 5: Defense robotics will drive the first $50B+ IPOs in the category

Defense robotics valuations are already pulling ahead of non-defense peers—and geopolitical tailwinds, national security urgency, and government-backed capital will drive the first landmark public outcomes in robotics.

The gap is visible in the data. Median Series A post-money valuation for defense robotics companies reached $105M in 2025, compared to $50M for non-defense—a gap that has widened every year since 2021.

Anduril closed at a $60 billion valuation in March 2026. Saronic raised a $1.75 billion Series D for autonomous shipbuilding the same month. These are not outliers. They are leading indicators of a category beginning to produce the kinds of outcomes that define a decade of venture returns.

The structural reasons are straightforward. Defense procurement cycles, while long, are predictable—contracts are large, renewal rates are high, and switching costs are significant. The customer has both the budget and the urgency to deploy at scale. Unlike commercial robotics, where enterprises weigh ROI carefully and move slowly, defense buyers operate under a different calculus: capability gaps carry national security consequences, and the cost of inaction is measured in strategic risk, not dollars.

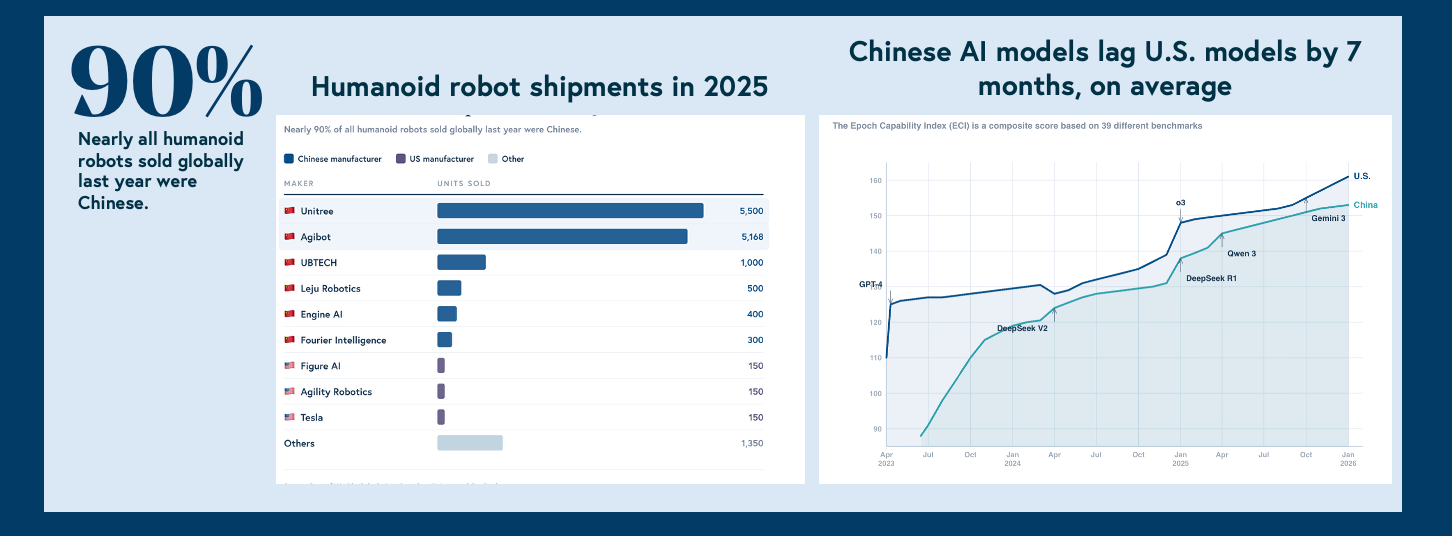

The geopolitical dimension amplifies this. Nations have reached a conclusion that's becoming impossible to ignore: robotics fundamentally changes the nature of modern warfare. Manufacturing concentration sharpens the stakes: nearly 90% of humanoid robots sold globally in 2025 were Chinese-manufactured.

Chinese AI models lag US models by approximately seven months on average—a gap that has narrowed consistently. The same dynamic that drove semiconductor and satellite investment is now playing out in autonomous systems, and the US government is beginning to treat robotics as a national security imperative.

The dual-use dimension deserves honest acknowledgment. The most defensible companies in this space aren't building single-purpose weapons systems—they're building autonomous platforms, perception systems, and decision-making infrastructure with genuine commercial application. The line between defense and commercial isn't always clean, and founders navigating this space are making real choices about where to draw it.

"The dual-use question is real, and it's not going away. The most interesting companies in this space aren't choosing between defense and commercial — they're building systems capable enough for defense requirements that also happen to be transformative in commercial contexts. Where the line sits depends on the technology, the customer, and the founder's own judgment about what they're willing to build."

— Matthew Buffa, Co-Founder, Breaker

If defense is already producing these outcomes, why do so many observers still call this a bubble? We don't think it is one.

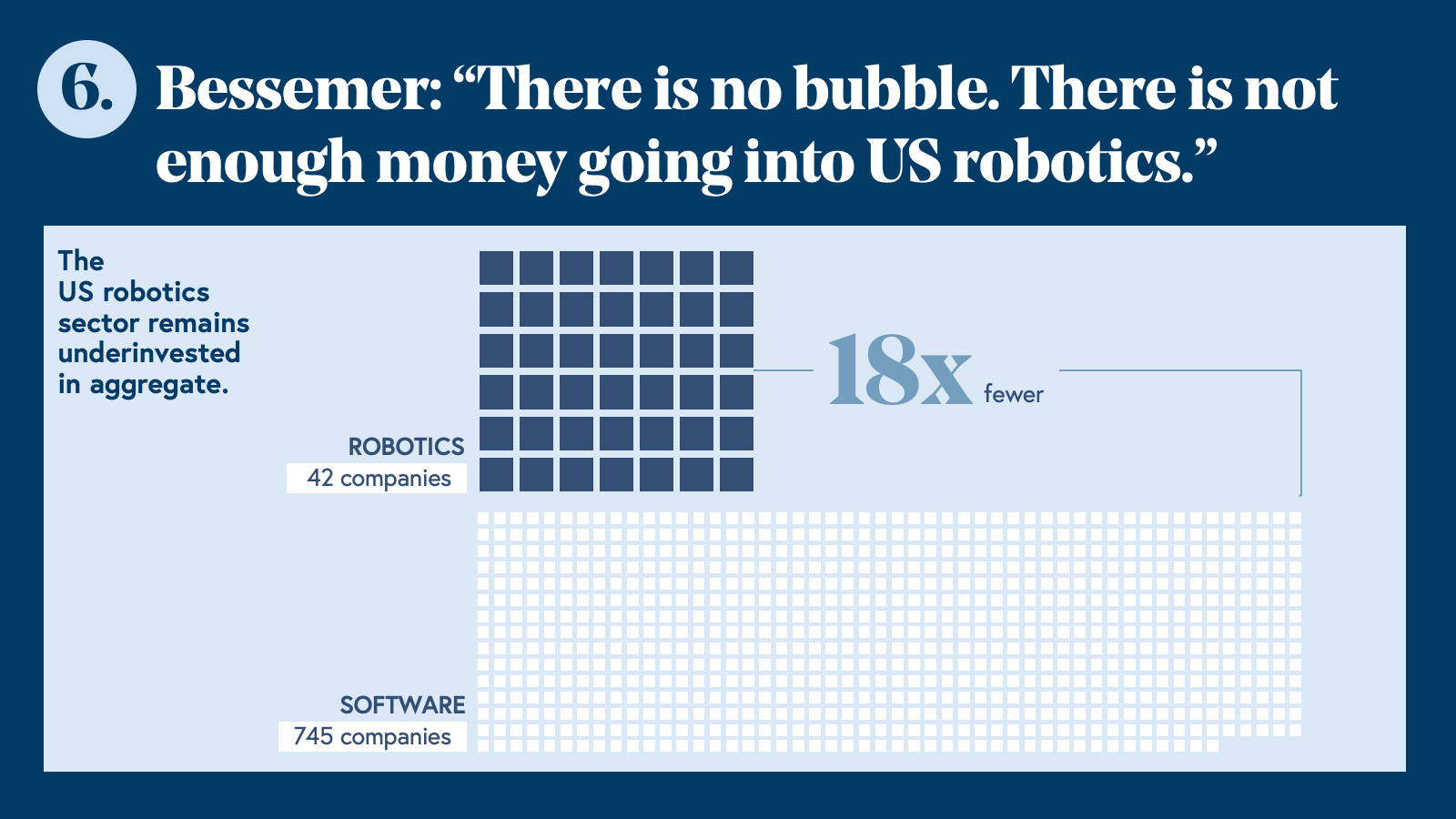

Prediction 6: There is no robotics bubble. There is not enough money going into this space.

Every few weeks, a funding announcement prompts the same reaction: surely this is too much. A humanoid robot company raising at a billion-dollar valuation before shipping a product. A defense autonomy platform closing a round larger than most Series C software companies. The bubble narrative writes itself—but it’s also wrong.

In the past five years, 745 software companies have raised more than $30M. The equivalent figure for robotics is 42, roughly one-eighteenth as many funded companies, in a sector whose underlying market is 30 times larger than global software spend. Even accounting for the capital intensity of hardware businesses, robotics is structurally underinvested relative to its addressable opportunity. What looks like a bubble is, in aggregate, catch-up capital flowing into a category that venture has underfunded for decades.
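As a quick check on the ratio, the arithmetic below uses only the two counts stated above (745 software companies and 42 robotics companies, each having raised more than $30M in five years). The function name is illustrative, not part of any dataset or tool.

```python
def funding_ratio(software_companies: int, robotics_companies: int) -> float:
    """Number of funded software companies for every funded robotics company."""
    return software_companies / robotics_companies

# Counts taken from the paragraph above (companies raising more than $30M, past five years).
ratio = funding_ratio(745, 42)
print(f"{ratio:.1f}x")  # 17.7x, which rounds to the 18x cited in the text
```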

The growth projections reinforce this. Most analysts project 50x industry growth over the next decade. We think that estimate is conservative, because it anchors on automating existing workflows rather than accounting for the entirely new categories of economic activity that capable, general-purpose robots will create. Transformative technology doesn't expand linearly; it creates markets that didn't previously exist.

None of this means every company being funded will succeed. Some valuations are stretched. Not every humanoid robot company will find a viable path to deployment at scale. As we argued in Prediction 3, capital will consolidate around a small number of leaders, and the companies that don't make that cut will not find soft landings. Selectivity still matters.

But selectivity is different from scarcity. The overall level of investment in robotics—relative to the size of the opportunity and the pace of capability development—remains well below where it should be. The window to back foundational companies is open now, before the ChatGPT moment arrives and before talent consolidation completes. Waiting for proof of the inflection is a sure-fire way to miss it.

"Five years from now, the majority of robots deployed globally won't be built by the startups we know today. They will be built by companies that haven't even started building robots yet—but who know how to do it at scale."

— Nikita Rudin, CEO & Founder, Flexion

That's the bet. Many of the companies that will define physical AI over the next decade are being founded right now, and some don't exist yet.

Gaps and open debates in robotics and physical AI

The six predictions above reflect our highest-conviction views. But many open questions remain that will determine how the ecosystem shakes out over the next three years.

The reliability gap. Getting from 80% task success to 99.9% is not a linear problem. The last 20% requires fundamentally different approaches—tactile sensing, force feedback, better sim-to-real transfer for manipulation—and it takes longer than most timelines assume. This isn't a reason for pessimism. It's a reason to be precise about which problems are engineering challenges and which require new science, infrastructure, and tooling.
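The non-linearity is easiest to see in failure rates rather than success rates. The sketch below uses Python's standard `fractions` module to keep the arithmetic exact; the function name is illustrative. Moving from 80% to 99.9% success raises the success rate by only about 25%, yet it requires cutting failures 200-fold, and even the final step from 99% to 99.9% is a 10x reduction.

```python
from fractions import Fraction

def failure_reduction(success_before: Fraction, success_after: Fraction) -> Fraction:
    """Factor by which the failure rate must shrink to move between two success rates."""
    return (1 - success_before) / (1 - success_after)

before, after = Fraction(80, 100), Fraction(999, 1000)

print(failure_reduction(before, after))               # 200: failures drop from 20% to 0.1%
print(failure_reduction(Fraction(99, 100), after))    # 10: the 99% -> 99.9% step alone
print(float(after / before))                          # 1.24875: success rate rises only ~25%
```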

"We are nowhere near 'solving' the data problem in robotics. My experience at Waymo taught me that real-world deployment uncovers harder, more specialized data curation and labeling problems over time. Closing the gap between 99% and 99.9% reliability is a steep hill climb that takes longer than most people realize."

— Lisa Yan, Co-Founder & CEO, Argus Systems

The inference cost question. World models and large vision-language-action models are expensive to run in real time. Unlike text models, which batch requests across thousands of concurrent users on shared infrastructure, robotics models must generate an environment state every few milliseconds per robot, meaning each deployment effectively requires a dedicated GPU pipeline. Inference efficiency has improved remarkably: LLM inference costs dropped roughly 1,000x in three years. Whether robotics inference follows a similar curve, and how fast, will determine which foundation model approaches are commercially viable at scale. We think the cost curve will steepen, but the exact timeline is unclear.
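To put the LLM figure in annual terms: a roughly 1,000x cost drop over three years works out to about 10x per year. This assumes steady compounding, which is a simplification, since real price cuts tend to arrive in steps. The helper name is illustrative.

```python
def annual_cost_factor(total_drop: float, years: float) -> float:
    """Average yearly improvement factor implied by a total cost drop, assuming steady compounding."""
    return total_drop ** (1 / years)

# ~1,000x over three years, per the paragraph above.
factor = annual_cost_factor(1_000, 3)
print(f"~{factor:.0f}x cheaper per year")  # ~10x cheaper per year, i.e. about 90% lower each year
```

The same helper can be pointed at robotics inference costs once comparable multi-year figures exist; a yearly factor well below 10 would signal a slower curve than LLMs followed.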

Interpretability as the next infrastructure layer. As capital flows into world models and foundation models at scale, a class of problems is emerging that the industry hasn't fully reckoned with: these models are largely opaque, and opacity is not acceptable in physical systems where failure has real-world consequences.

"Around $6B flowed into six or seven world model companies in Q1 2026 alone. As the industry matures, interpretability becomes non-negotiable. These models are black boxes right now. Expect a wave of startups building the tools to open them up."

— Mahesh Krishnamurthi, Co-Founder, Vayu Robotics

Open-source vs. closed. The LLM era demonstrated that open-source can dramatically accelerate ecosystem development and democratize access to capability. Whether the same dynamic plays out in robotics, where physical data and deployment infrastructure matter as much as model architecture, remains to be seen. Our working view: open-source will commoditize model architecture faster than most expect, but the data and deployment layers will remain proprietary long enough to matter. The companies that understand which parts of their stack to open and which to protect will have a meaningful strategic advantage over those that don't.

A tale of two truths in the future of robotics

The founders closest to this technology hold what sound like contradictory views—and they're not wrong.

"The ChatGPT moment for robotics is coming faster than most people realize. When it hits, the bottleneck will be production hours—real robots, real work, real environments. The companies that optimize for deployment over demos will separate decisively from the field."

— Brad Porter, CEO & Founder, Cobot

"Timelines are a lot longer than most people expect. General-purpose robotics is still five-plus years out."

— Philipp Wu, Co-Founder, stealth robotics company

Both are right, but they're describing different things. Porter is describing the path to the inflection. The ChatGPT moment is coming, and the companies that define it will be the ones compounding real deployment hours today. Production velocity isn't an alternative to the generalized prize; it's how you earn the right to build it.

Wu describes how far away that inflection actually is. The generalized moment—where a single robot performs meaningfully across tasks and environments without domain-specific fine-tuning—is still further out than most timelines assume. The research gaps are real, and the capital requirements are significant.

These aren't competing predictions. They're a map for founders: deploy decisively now and build with the generalized moment as your horizon.

The inflection is coming. The talent is migrating, the hardware is commoditizing, and the data infrastructure is being built. The companies that will define physical AI over the next decade are being founded and funded right now.

If you're building in this space, we want to hear from you. Tell us what you think about these predictions. Reach out to our robotics team at robotics@bvp.com.